AI Amplifies Corporate Cybersecurity Concerns

March 2, 2026

Artificial intelligence is reshaping how businesses operate. It's also reshaping how businesses get hacked.

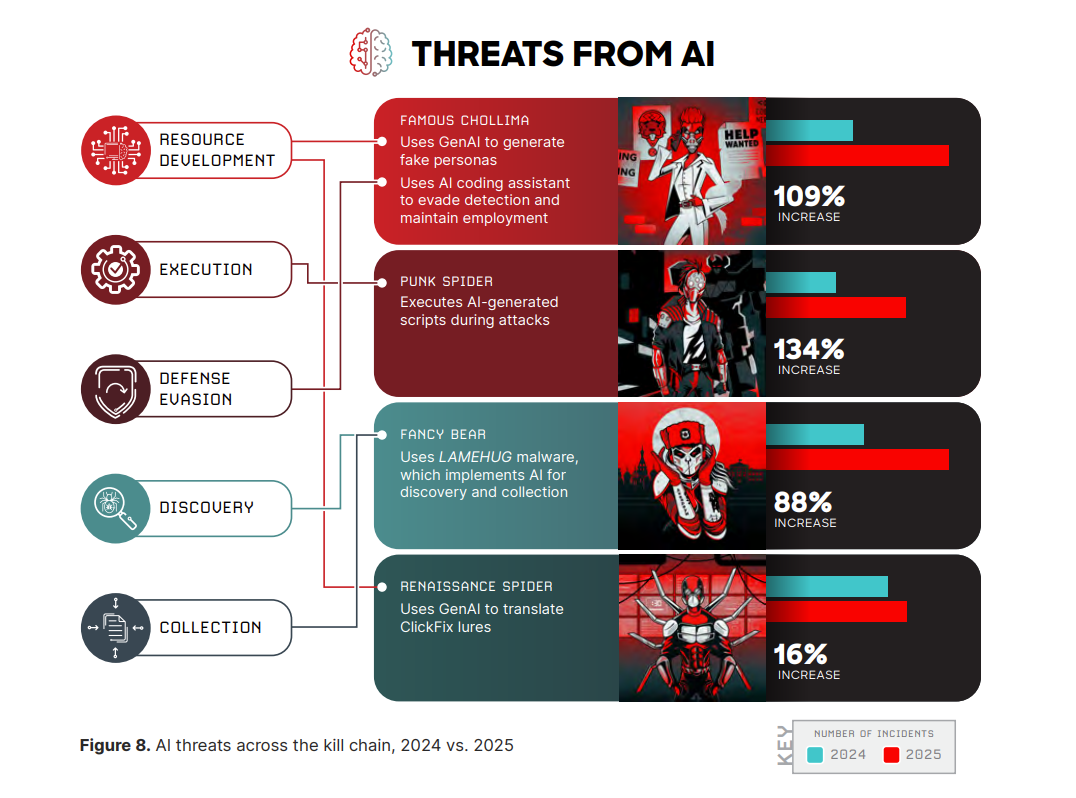

In 2026, artificial intelligence (AI) is a risk amplifier that is already changing the speed, scale, and credibility of cyberattacks. The most significant shift is how quickly attacks move from initial contact to real business impact.

Source: CrowdStrike 2026 Global Threat Report

AI Shrinking Time to Respond

Security teams have long measured success by how quickly they can detect and respond to threats. Artificial intelligence has compressed that window. Modern attacks increasingly unfold in minutes, not days, leaving little margin for slow approvals or informal processes.

Industry reporting reinforces this trend. According to recent threat intelligence, average breakout times (the interval between an attacker's initial compromise and lateral movement to other systems) continue to fall, with some intrusions moving laterally in under a minute. That pace fundamentally changes incident response expectations for leadership.

This is why relying solely on perimeter defenses or assuming an issue will be noticed in time is no longer a viable strategy.

AI-Driven Phishing Harder to Spot

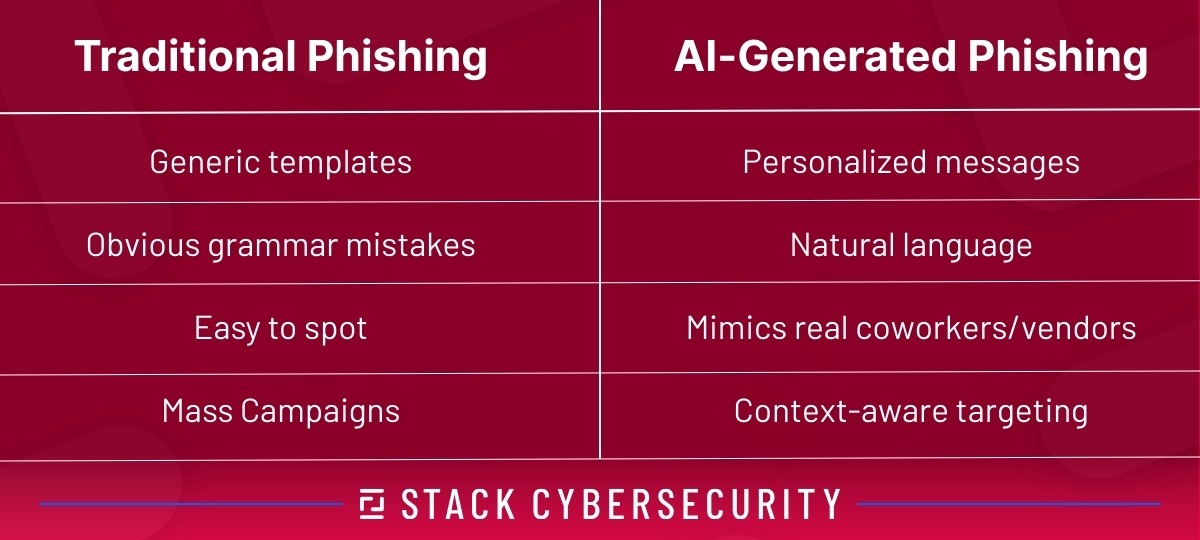

Phishing remains the most common initial access vector, but artificial intelligence has changed how convincing these messages appear. Instead of generic templates, hackers now generate context-aware emails that mirror real vendors, coworkers, and workflows.

STACK has explored this evolution in Phishing 2.0: How AI Made Cyber Attacks Smarter, More Dangerous. The key takeaway for business owners is simple: authenticity checks alone don't guarantee safety.

Credential theft increasingly succeeds because messages look routine, not suspicious. That makes layered identity controls essential.

If your team needs a refresher, Understanding Multi-Factor Authentication explains why multi-factor authentication (MFA) remains critical, even as attackers adapt.

Deepfakes Turn Trust Into Attack Surface

Deepfake technology is no longer limited to viral videos. In 2026, it's actively used for fraud, impersonation, and financial manipulation. Voice and video can no longer be treated as proof of identity. Check out our Deepfake Detection Guide for tips and tricks.

These attacks succeed when companies rely on informal approvals, urgency-based exceptions, or trust without verification. A convincing voice message or video call can override hesitation when controls are weak.

The implication for leadership is clear: verification processes must not depend solely on what employees see or hear.
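One way to picture a verification process that does not depend on sight or sound is a simple rule: above a set amount, a request proceeds only after an independent check, no matter how it arrived or how urgent it sounds. The sketch below is illustrative only. The check names, the `Request` fields, and the 1,000 USD threshold are assumptions made for this example, not a standard or a STACK product.

```python
from dataclasses import dataclass

# Hypothetical independent checks (assumed names for illustration).
# A voice or video call itself never appears here, because it is not proof of identity.
INDEPENDENT_CHECKS = frozenset({
    "callback_known_number",   # call back on a number already on file
    "ticket_system_approval",  # approval recorded in a system of record
    "in_person",               # confirmed face to face
})


@dataclass(frozen=True)
class Request:
    requester: str
    action: str
    amount_usd: float
    received_via: str                      # e.g. "video_call", "email"; informational only
    checks_completed: frozenset = frozenset()


def may_proceed(req: Request, threshold_usd: float = 1000.0) -> bool:
    """Allow low-value requests; require an independent check for everything else."""
    if req.amount_usd < threshold_usd:
        return True
    # How the request was received is deliberately ignored: only completed
    # independent checks count toward approval.
    return bool(req.checks_completed & INDEPENDENT_CHECKS)


urgent = Request("cfo", "wire_transfer", 50000.0, "video_call")
verified = Request("cfo", "wire_transfer", 50000.0, "video_call",
                   frozenset({"callback_known_number"}))
print(may_proceed(urgent), may_proceed(verified))
```

Note that the rule has no "urgent" flag to bypass it. Leaving the exception path out of the design is the point of the sketch.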

Shadow AI Data Exposure Problem

Shadow AI refers to employees using AI tools for work without formal approval, policy guidance, or monitoring. This behavior is widespread because the productivity gains are real and the tools are easy to access.

Research highlighted in the National Cybersecurity Alliance and CybSafe Oh Behave! report shows that a significant percentage of workers admit to sharing sensitive work information with AI tools without employer approval.

When policies are unclear, employees default to speed. That creates unmonitored data paths for customer information, internal documents, financial data, and intellectual property.
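To make the idea of a monitored data path concrete, here is a minimal sketch of a check that flags obviously sensitive content before a prompt leaves the company. The pattern names and regular expressions are assumptions chosen for illustration. They will miss many real cases and are no substitute for a data loss prevention tool or a clear policy.

```python
import re

# Illustrative patterns only (assumed for this example); real sensitive data
# takes many more forms than these three.
PATTERNS = {
    "email_address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn_like": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "confidential_marking": re.compile(r"\b(confidential|CUI)\b", re.IGNORECASE),
}


def flag_sensitive(text: str) -> list[str]:
    """Return the sorted labels of every pattern found in the text."""
    return sorted(label for label, pattern in PATTERNS.items() if pattern.search(text))


prompt = "Summarize this: contact jane.doe@example.com, SSN 123-45-6789"
print(flag_sensitive(prompt))
```

A check like this could sit in a browser extension, proxy, or internal chat front end. Where it runs matters less than the fact that someone has decided what counts as sensitive and where it is allowed to go.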

Consumer vs. Enterprise AI Matters

Not all AI platforms handle data the same way. Consumer AI tools are governed by general terms of service that often allow data collection, retention, and use for model improvement. A low monthly subscription doesn't fundamentally change those terms.

Enterprise AI platforms are different. Business tiers typically include contractual commitments around data segregation, retention limits, and restrictions on model training. These features do not guarantee legal privilege, but they materially reduce risk compared to consumer tools.

If employees are using consumer AI platforms to analyze incidents, evaluate compliance gaps, or discuss sensitive business decisions, those conversations may be discoverable in litigation or regulatory investigations.

AI Governance Lags Behind AI Adoption

Many organizations adopt AI tools faster than they establish governance. That gap becomes visible after incidents, during insurance claims, and in regulatory reviews.

STACK’s perspective on governance, risk, and compliance is outlined in Cyber GRC: Why Governance Matters. Governance connects technology decisions to accountability, documentation, and measurable outcomes.

If leadership can't clearly articulate who approves AI use, what data is permitted, and how usage is monitored, the company operates on assumption rather than control.
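Those three questions (who approves, what data is permitted, how usage is monitored) can be written down as a small, reviewable record per tool. The sketch below assumes hypothetical tool names, data classes, and approver titles. It shows the shape of such a record, not a recommended policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIToolPolicy:
    tool: str
    approver: str               # who approved use of the tool
    permitted_data: frozenset   # data classes allowed in prompts
    monitoring: str             # how usage is reviewed


# Hypothetical registry; the names and values are assumptions for illustration.
POLICIES = {
    "enterprise-assistant": AIToolPolicy(
        tool="enterprise-assistant",
        approver="CISO",
        permitted_data=frozenset({"public", "internal"}),
        monitoring="monthly audit-log review",
    ),
}


def is_use_permitted(tool: str, data_class: str) -> bool:
    """Permit use only for approved tools and explicitly allowed data classes."""
    policy = POLICIES.get(tool)
    if policy is None:
        return False  # no record means no approval, which is shadow AI
    return data_class in policy.permitted_data


print(is_use_permitted("enterprise-assistant", "internal"))  # approved tool, allowed data
print(is_use_permitted("enterprise-assistant", "cui"))       # approved tool, disallowed data
print(is_use_permitted("free-chatbot", "public"))            # tool with no policy record
```

The default answer is "no" for anything not listed. That keeps the record itself as the evidence of control when an insurer or regulator asks for it.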

Financial, Contract Risk Increasing

AI-related incidents also carry financial consequences. Cyber insurers increasingly scrutinize security controls, training records, and policy enforcement after an incident.

As discussed in How to Prepare for Your Cyber Insurance Journey, gaps in basic controls or misrepresentation of practices can lead to denied claims.

For manufacturers and defense suppliers, the risk compounds. Using consumer or free AI tools to discuss controlled unclassified information (CUI) or contract performance can create compliance exposure alongside cybersecurity risk.

Recent developments are covered in Latest Cybersecurity Updates and Preparation Steps for Defense Suppliers.

What Business Leaders Should Do Now

Business leaders should treat AI as a data-handling workflow, not a novelty tool. That means understanding where AI is already in use, establishing acceptable use policies, strengthening verification workflows, training employees on modern impersonation tactics, and documenting governance decisions.

The goal is resilience. As outlined in Proactive Cybersecurity Return on Investment, companies that invest in prevention, visibility, and governance consistently recover faster and at lower cost.

Leadership, Governance Issue

Artificial intelligence will continue to change how work gets done. It also creates records, and those records are subject to the same scrutiny as any other business document.

The companies that succeed in 2026 will be the ones that treat AI security as a leadership and governance issue, not an afterthought delegated to technology alone.

STACK Cybersecurity helps companies assess AI risk, establish governance frameworks, and align AI usage with cybersecurity, compliance, and contract requirements. Contact Us to implement AI solutions.